
First CNN model version

100 files changed with 181 additions and 0 deletions

.gitignore: +2 -0

README.md: +23 -0

TODO.md: +11 -0

classification_cnn_keras.py: +103 -0

generate_dataset.py: +42 -0

BIN

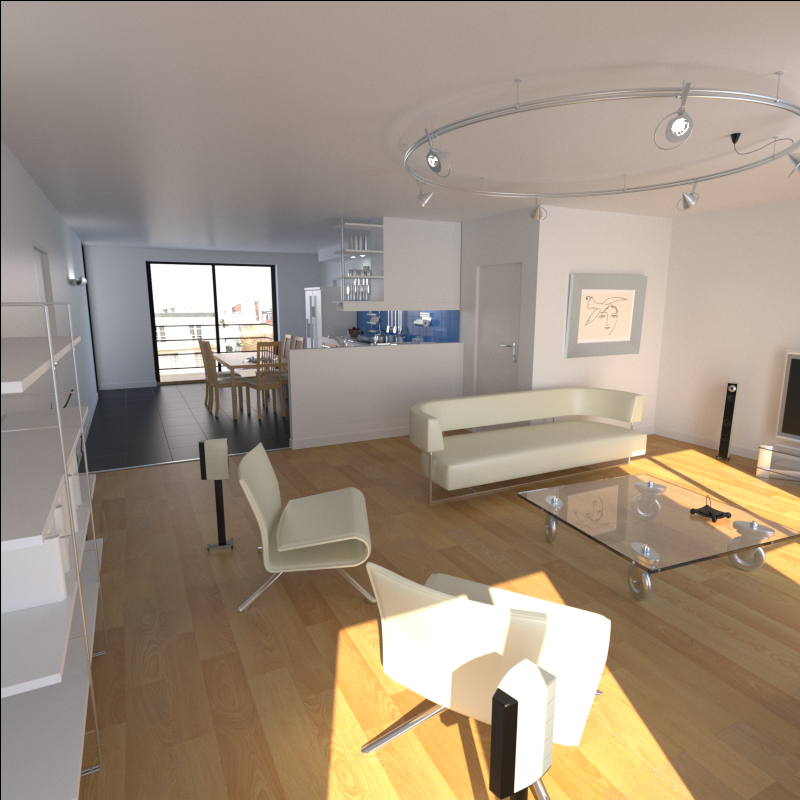

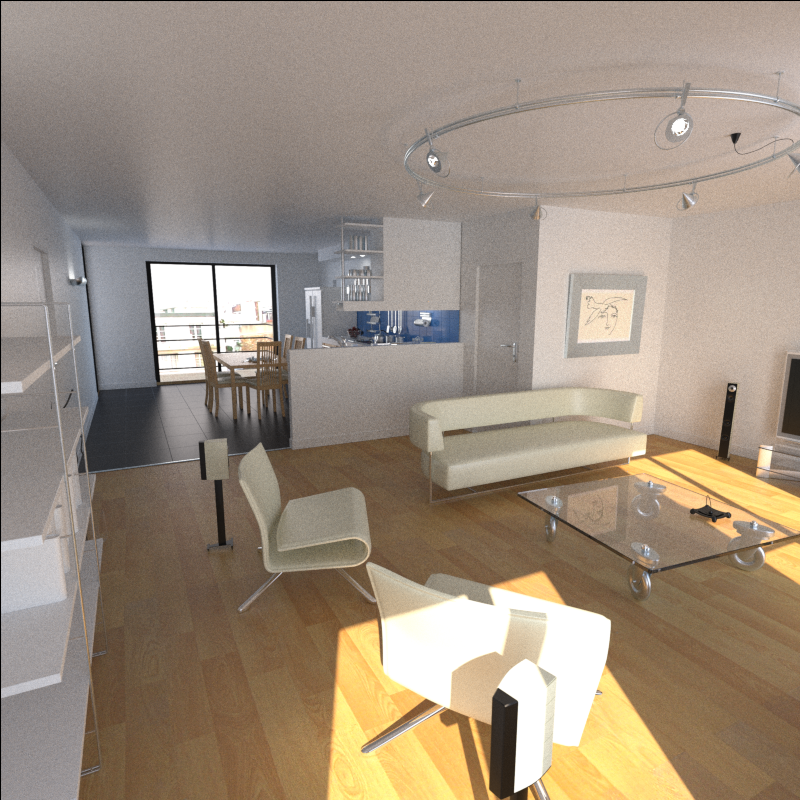

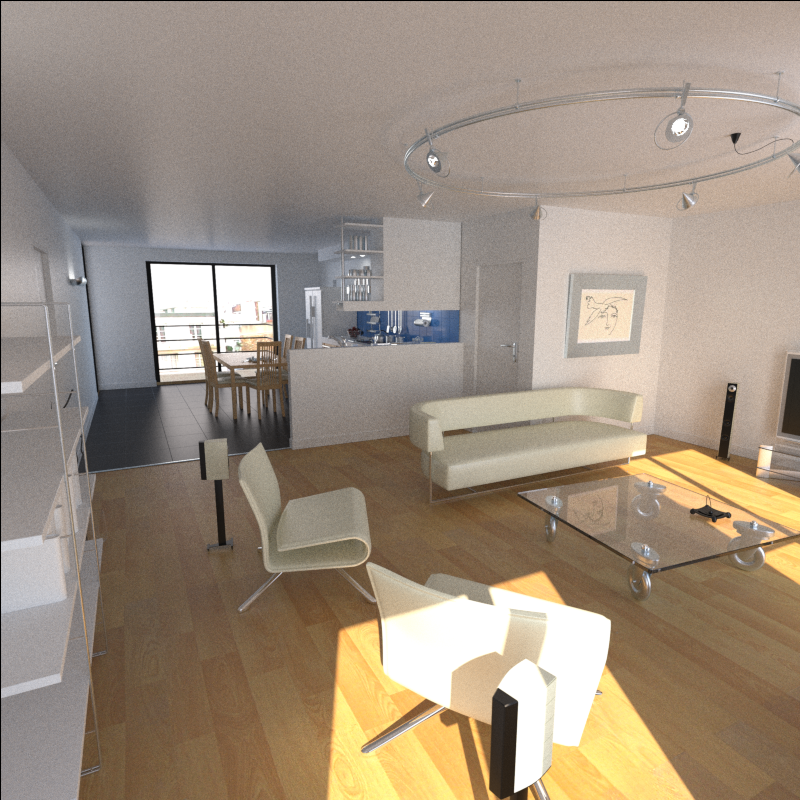

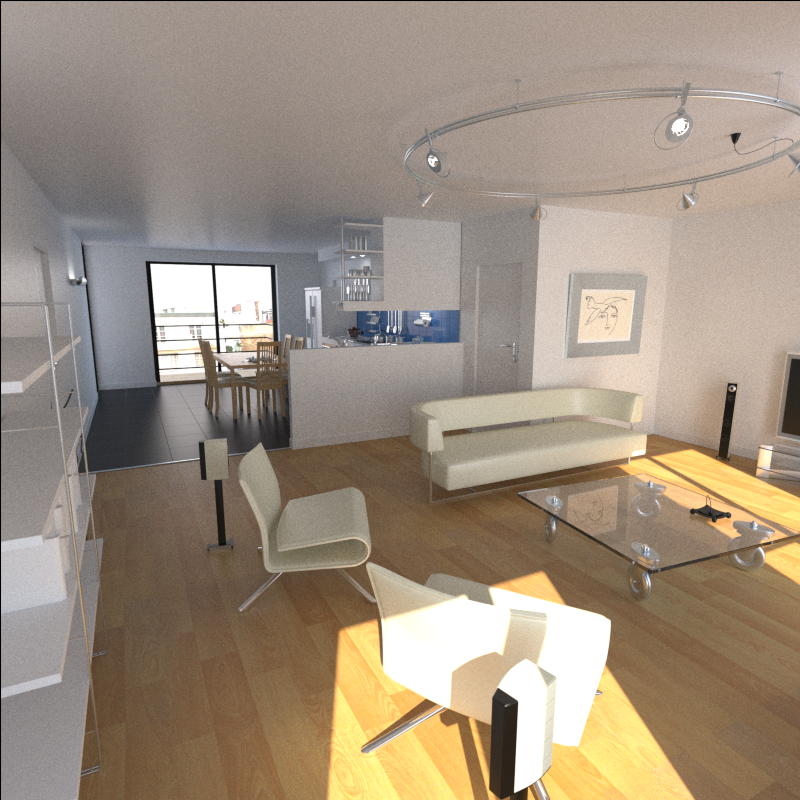

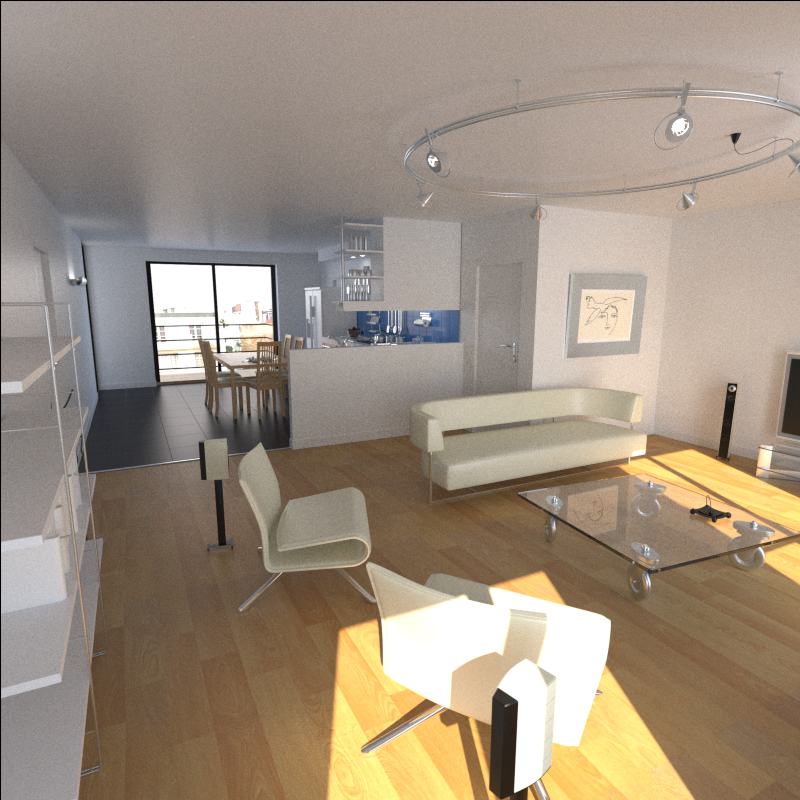

img_train/final/appartAopt_00850.png

BIN

img_train/final/appartAopt_00860.png

BIN

img_train/final/appartAopt_00870.png

BIN

img_train/final/appartAopt_00880.png

BIN

img_train/final/appartAopt_00890.png

BIN

img_train/final/appartAopt_00900.png

BIN

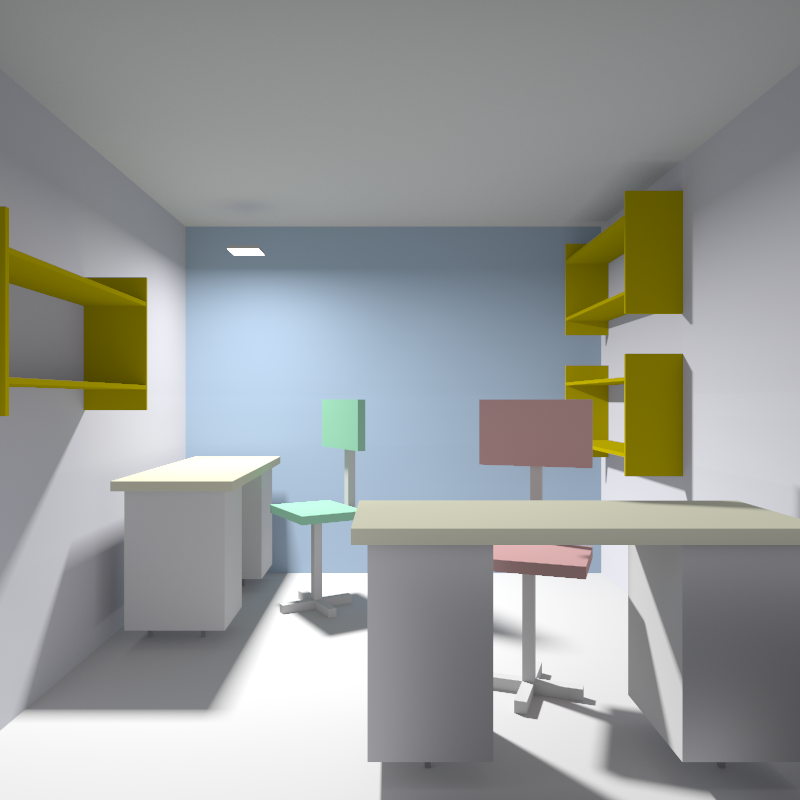

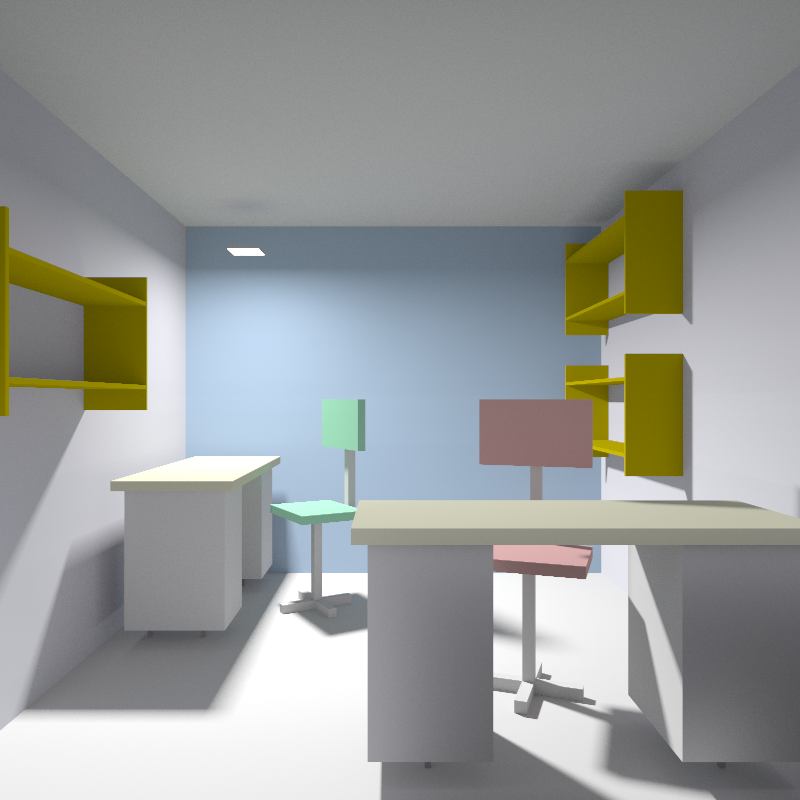

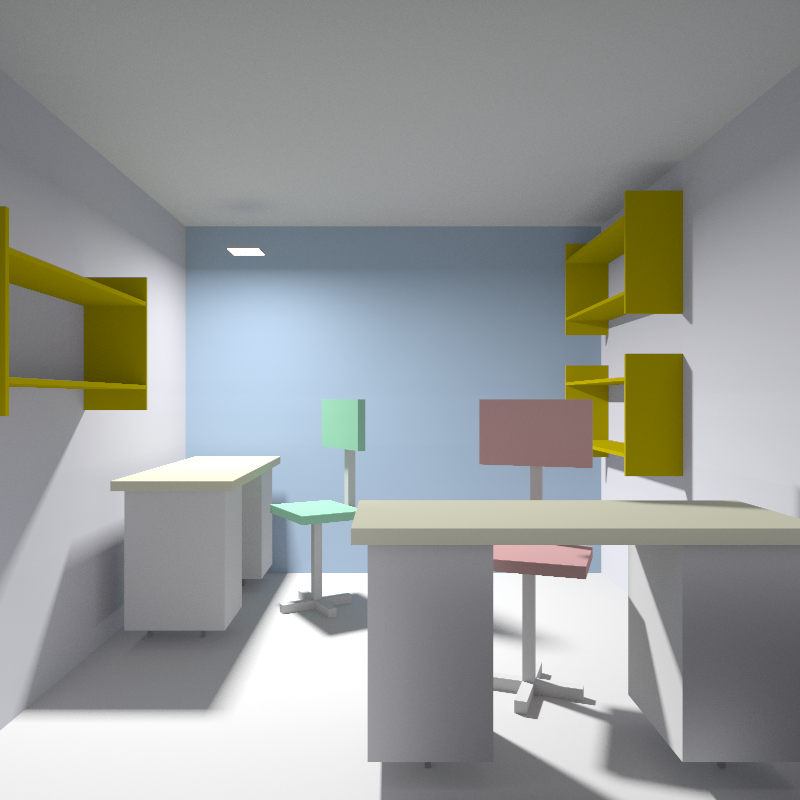

img_train/final/bureau1_9700.png

BIN

img_train/final/bureau1_9750.png

BIN

img_train/final/bureau1_9800.png

BIN

img_train/final/bureau1_9850.png

BIN

img_train/final/bureau1_9900.png

BIN

img_train/final/bureau1_9950.png

BIN

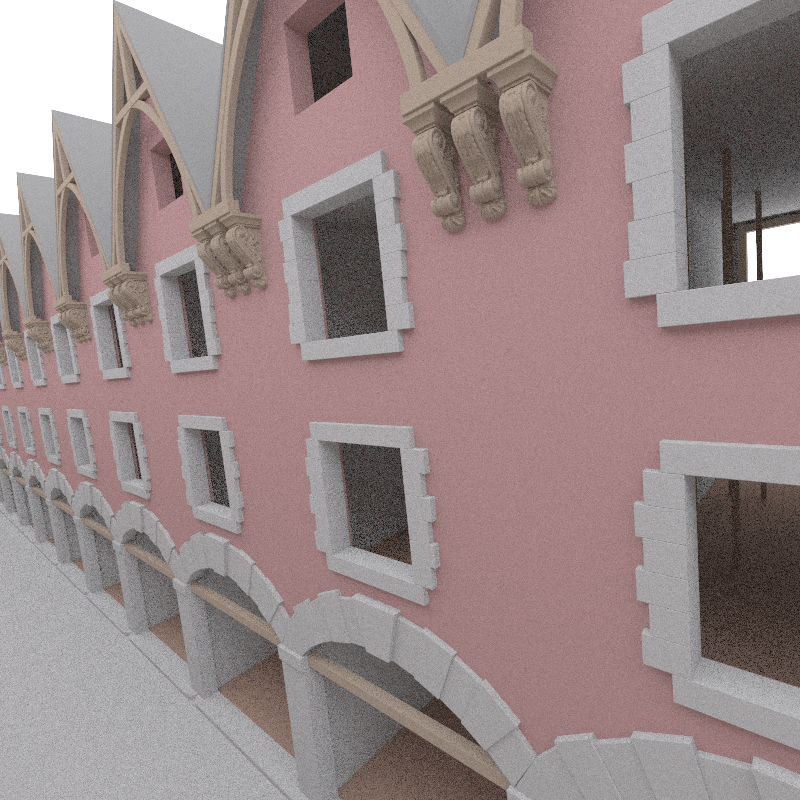

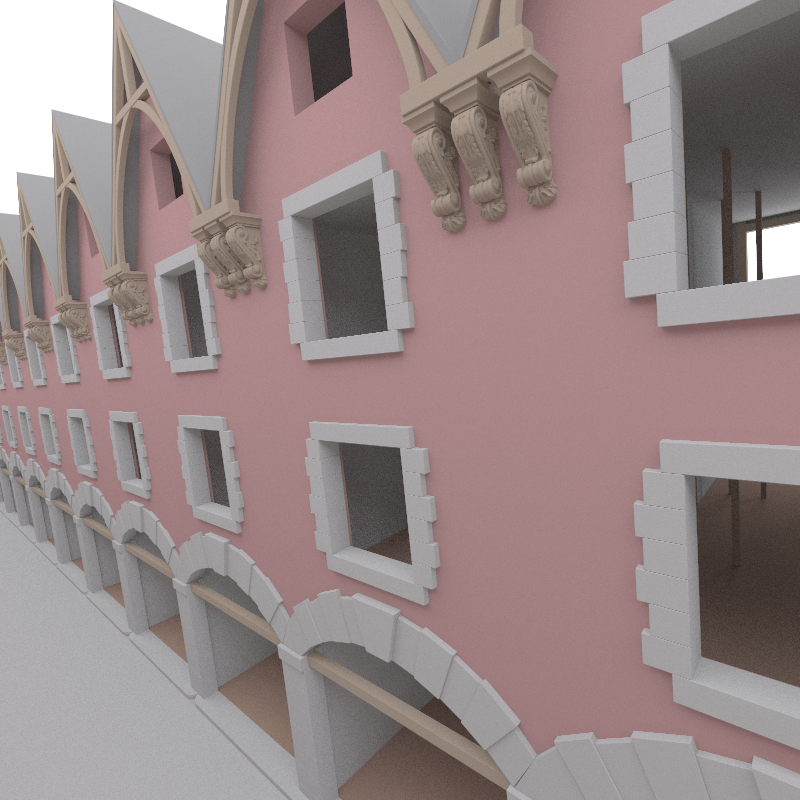

img_train/final/cendrierIUT2_01180.png

BIN

img_train/final/cendrierIUT2_01240.png

BIN

img_train/final/cendrierIUT2_01300.png

BIN

img_train/final/cendrierIUT2_01360.png

BIN

img_train/final/cendrierIUT2_01420.png

BIN

img_train/final/cendrierIUT2_01480.png

BIN

img_train/final/cuisine01_01150.png

BIN

img_train/final/cuisine01_01160.png

BIN

img_train/final/cuisine01_01170.png

BIN

img_train/final/cuisine01_01180.png

BIN

img_train/final/cuisine01_01190.png

BIN

img_train/final/cuisine01_01200.png

BIN

img_train/final/echecs09750.png

BIN

img_train/final/echecs09800.png

BIN

img_train/final/echecs09850.png

BIN

img_train/final/echecs09900.png

BIN

img_train/final/echecs09950.png

BIN

img_train/final/echecs10000.png

BIN

img_train/final/pnd_39750.png

BIN

img_train/final/pnd_39800.png

BIN

img_train/final/pnd_39850.png

BIN

img_train/final/pnd_39900.png

BIN

img_train/final/pnd_39950.png

BIN

img_train/final/pnd_40000.png

BIN

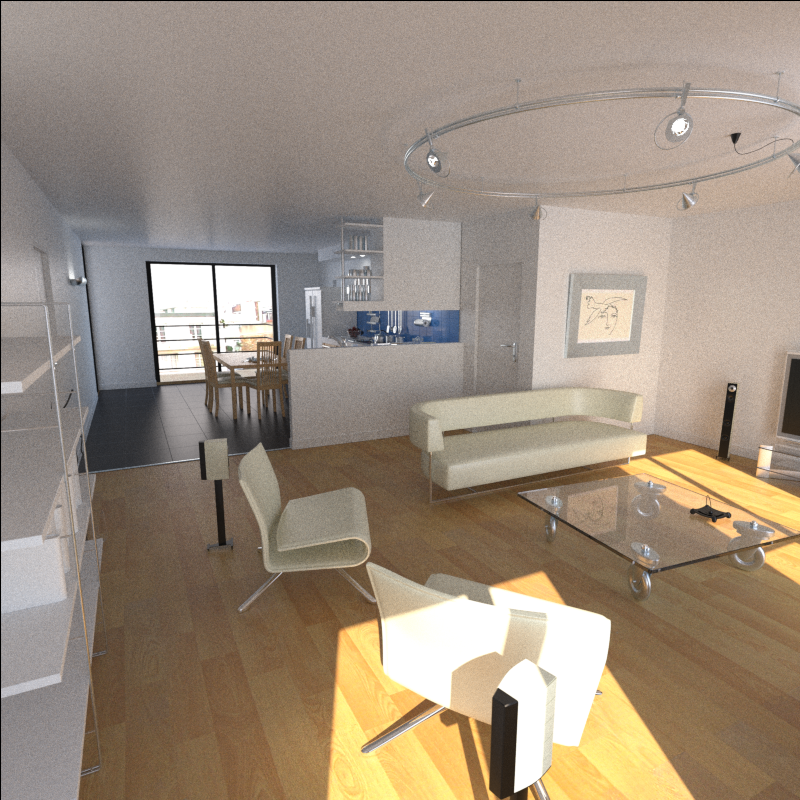

img_train/noisy/appartAopt_00070.png

BIN

img_train/noisy/appartAopt_00080.png

BIN

img_train/noisy/appartAopt_00090.png

BIN

img_train/noisy/appartAopt_00100.png

BIN

img_train/noisy/appartAopt_00110.png

BIN

img_train/noisy/appartAopt_00120.png

BIN

img_train/noisy/bureau1_100.png

BIN

img_train/noisy/bureau1_1000.png

BIN

img_train/noisy/bureau1_1050.png

BIN

img_train/noisy/bureau1_1100.png

BIN

img_train/noisy/bureau1_1150.png

BIN

img_train/noisy/bureau1_1250.png

BIN

img_train/noisy/cendrierIUT2_00040.png

BIN

img_train/noisy/cendrierIUT2_00100.png

BIN

img_train/noisy/cendrierIUT2_00160.png

BIN

img_train/noisy/cendrierIUT2_00220.png

BIN

img_train/noisy/cendrierIUT2_00280.png

BIN

img_train/noisy/cendrierIUT2_00340.png

BIN

img_train/noisy/cuisine01_00050.png

BIN

img_train/noisy/cuisine01_00060.png

BIN

img_train/noisy/cuisine01_00070.png

BIN

img_train/noisy/cuisine01_00080.png

BIN

img_train/noisy/cuisine01_00090.png

BIN

img_train/noisy/cuisine01_00100.png

BIN

img_train/noisy/echecs00050.png

BIN

img_train/noisy/echecs00100.png

BIN

img_train/noisy/echecs00150.png

BIN

img_train/noisy/echecs00200.png

BIN

img_train/noisy/echecs00250.png

BIN

img_train/noisy/echecs00300.png

BIN

img_train/noisy/pnd_100.png

BIN

img_train/noisy/pnd_1000.png

BIN

img_train/noisy/pnd_1050.png

BIN

img_train/noisy/pnd_1150.png

BIN

img_train/noisy/pnd_1200.png

BIN

img_train/noisy/pnd_1300.png

BIN

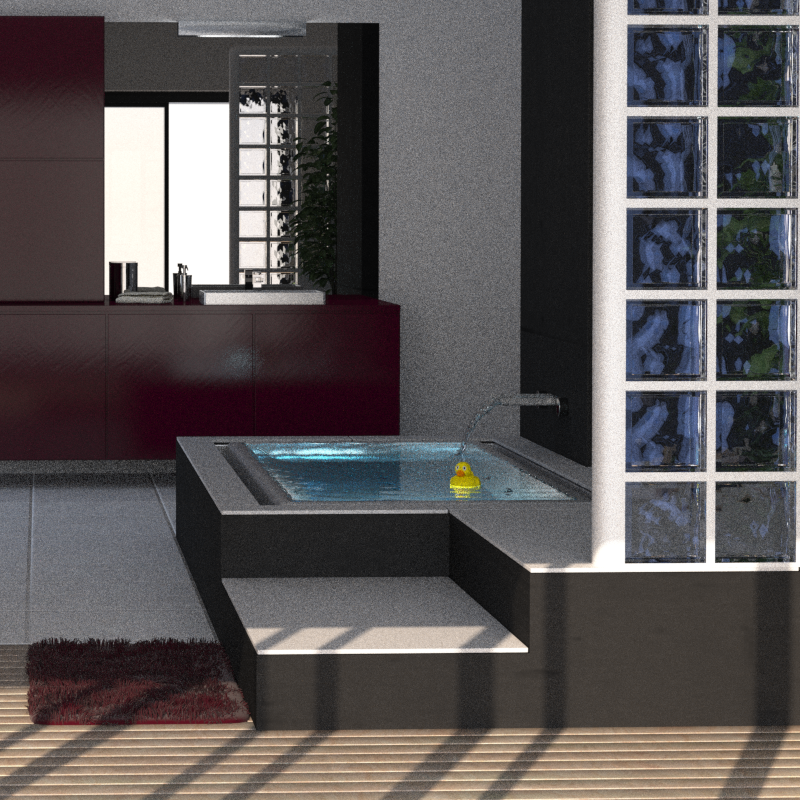

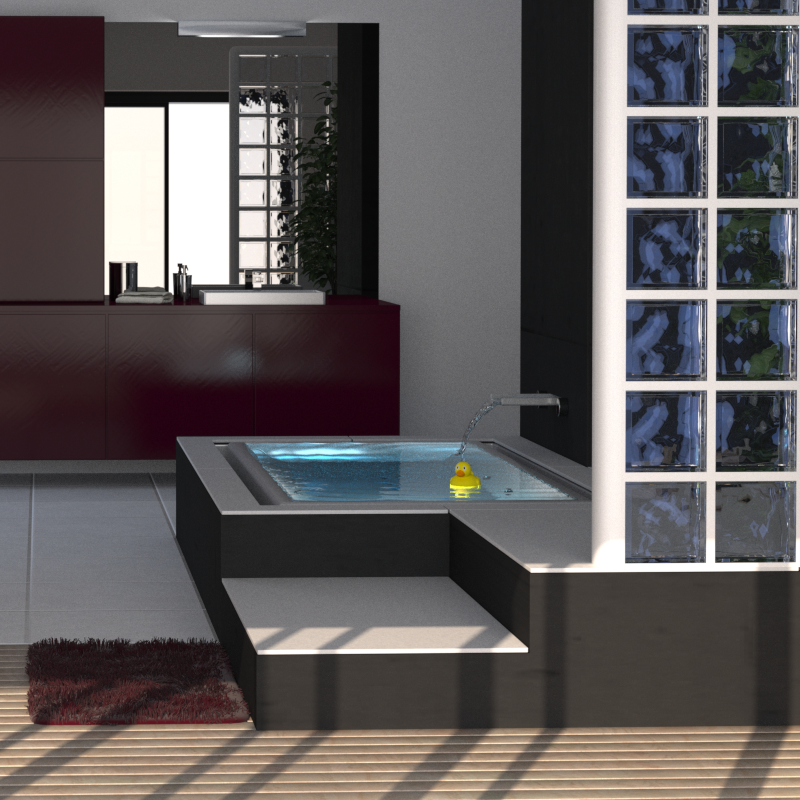

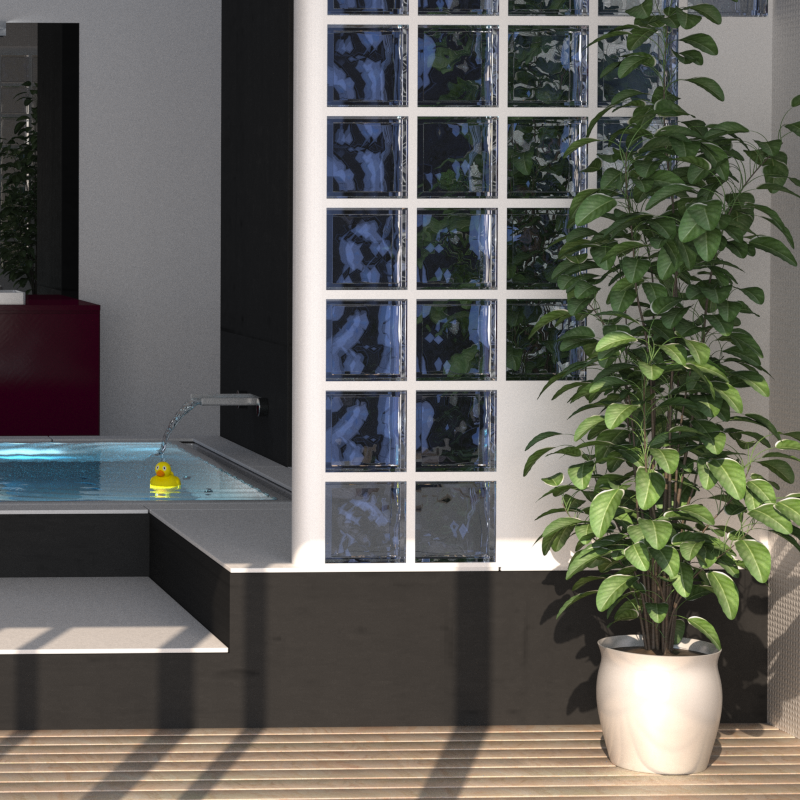

img_validation/final/SdB2_00900.png

BIN

img_validation/final/SdB2_00910.png

BIN

img_validation/final/SdB2_00920.png

BIN

img_validation/final/SdB2_00930.png

BIN

img_validation/final/SdB2_00940.png

BIN

img_validation/final/SdB2_00950.png

BIN

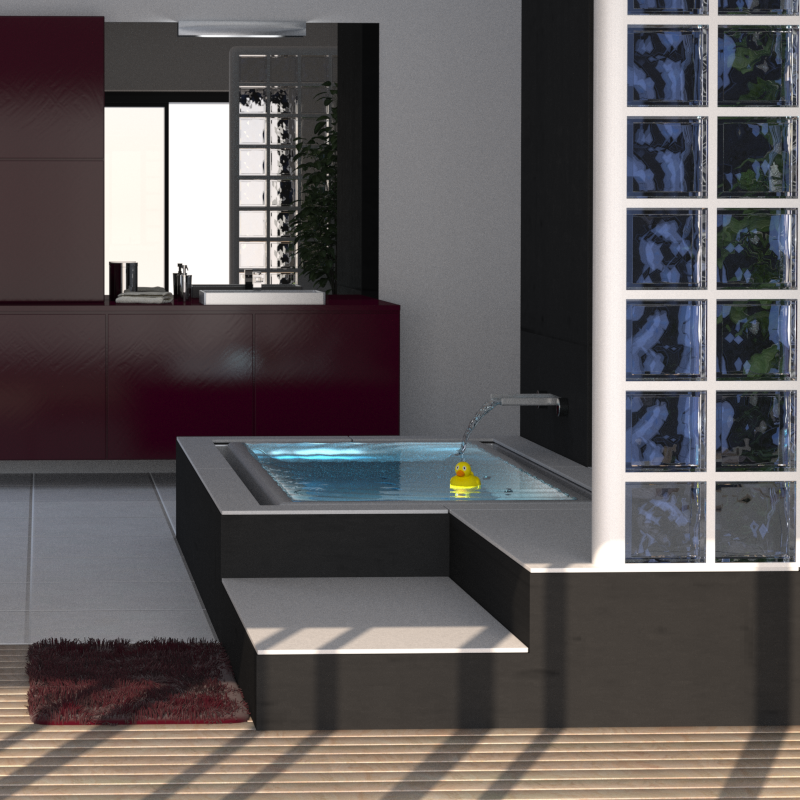

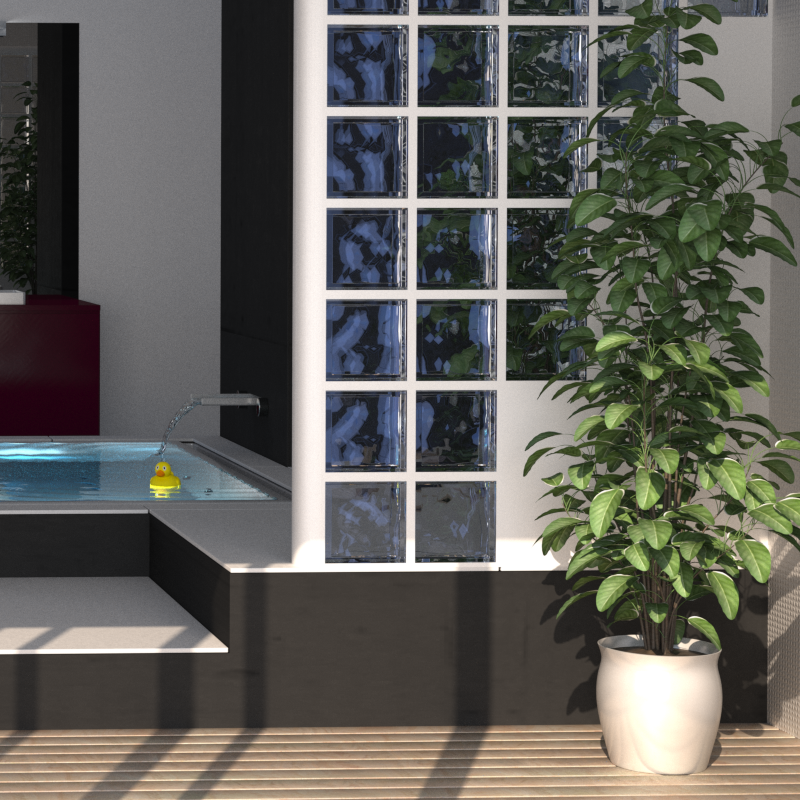

img_validation/final/SdB2_D_00900.png

BIN

img_validation/final/SdB2_D_00910.png

BIN

img_validation/final/SdB2_D_00920.png

BIN

img_validation/final/SdB2_D_00930.png

BIN

img_validation/final/SdB2_D_00940.png

BIN

img_validation/final/SdB2_D_00950.png

BIN

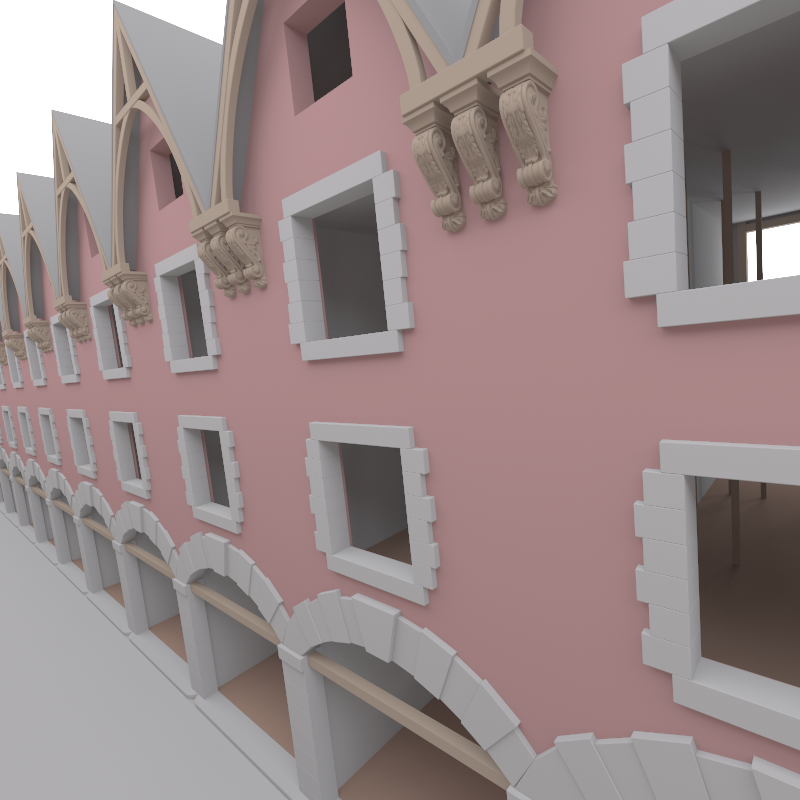

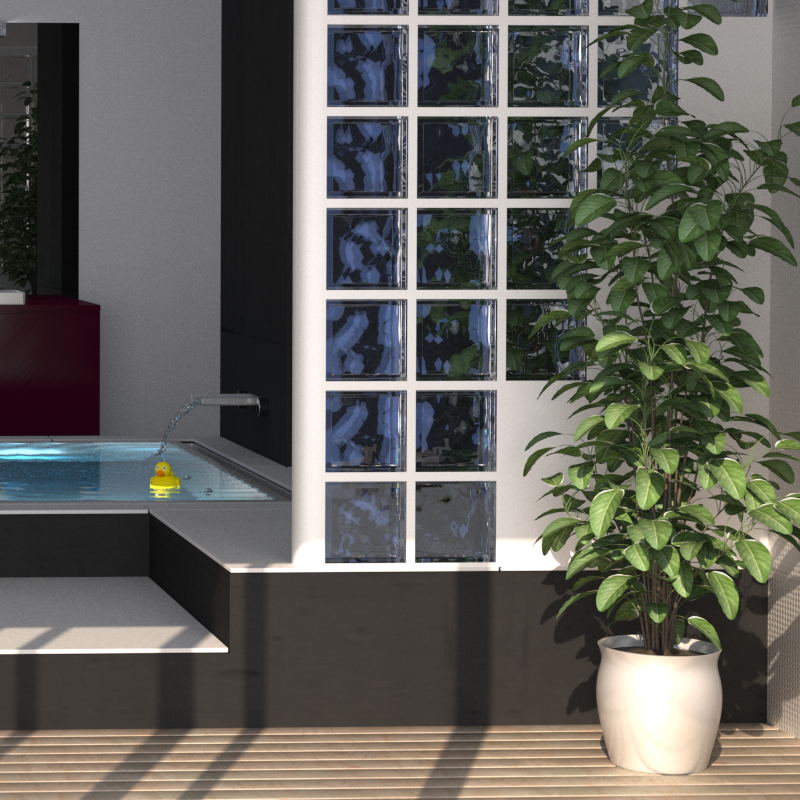

img_validation/final/selles_envir02850.png

BIN

img_validation/final/selles_envir02900.png

BIN

img_validation/final/selles_envir02950.png

BIN

img_validation/final/selles_envir03000.png

BIN

img_validation/final/selles_envir03050.png

BIN

img_validation/final/selles_envir03100.png

BIN

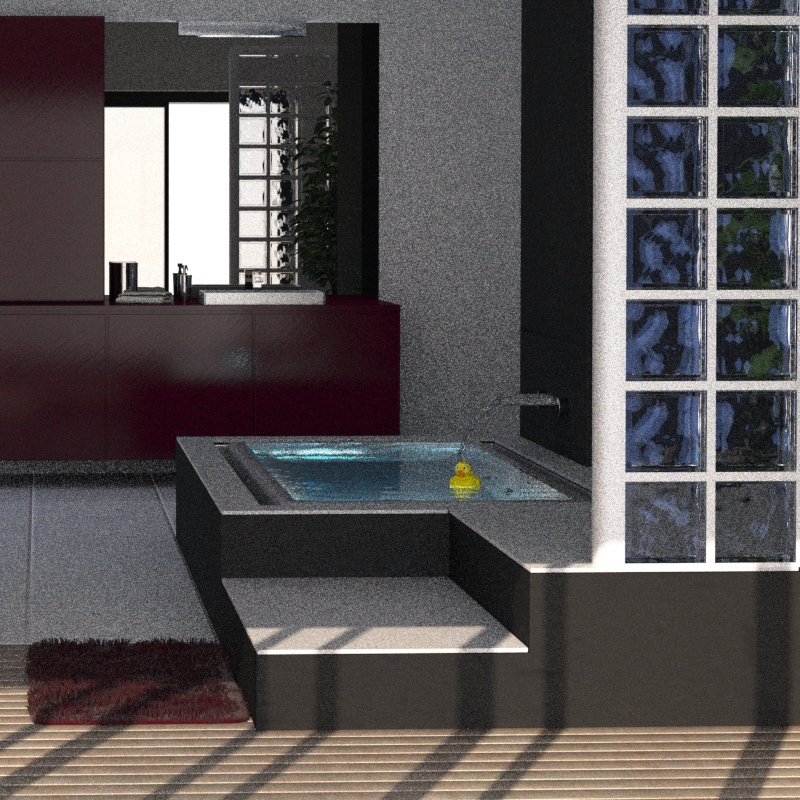

img_validation/noisy/SdB2_00020.png

BIN

img_validation/noisy/SdB2_00030.png

BIN

img_validation/noisy/SdB2_00040.png

BIN

img_validation/noisy/SdB2_00050.png

+ 0

- 0

img_validation/noisy/SdB2_00060.png